Don't Lie. But if you do, only lie once.

Dear Magicians,

Two excuses is a lie. One might be true. Two means you’re negotiating with reality.

We instinctively pile on reasons the way a bad lawyer piles on objections—hoping quantity creates conviction. It doesn’t. It creates doubt. Each extra excuse signals that the first one couldn’t carry the weight alone. So you bring reinforcements. And the moment you do, the listener stops asking, “Is this true?” and starts asking, “Why are they trying so hard to make it sound true?”

I see this all the time from students: “My cat died. My grandmother had her Bat Mitzvah.” Or my favorite: “My iPhone updated itself and my alarm got deleted.” My man, class is at noon!

By the second sentence, I’m no longer tracking the story—I’m tracking the pattern. One of those might be true. All three together feel engineered.

If a single excuse is sufficient, adding more doesn’t strengthen your case. It weakens it. Substantially.

There’s a quiet asymmetry here: one clean reason feels like evidence. Multiple reasons feel like strategy. The mind doesn’t average them—it defaults to the weakest. Like a chain, your explanation breaks at its thinnest link, not its strongest.

Writer and computer scientist Gurwinder Bhogal captured it perfectly: “We assume that the more arguments we give, the better our case. In reality, our weakest arguments dilute the strongest…”

One reason. Or none.

Until next time, have a M.A.G.I.C. Week,

Brian

P.S. Read all my Musings on Substack

P.P.S. Check out ad-free episodes on Patreon: patreon.com/drbriankeating

Appearance

I went down a rabbit hole in this conversation with Julian Dorey—tackling how truth gets distorted in science, why debates break down, and what happens when evidence takes a backseat to narrative. I’ll admit, I changed my mind more than once while recording this… watch it and tell me where you land—what would actually change your mind?

Genius

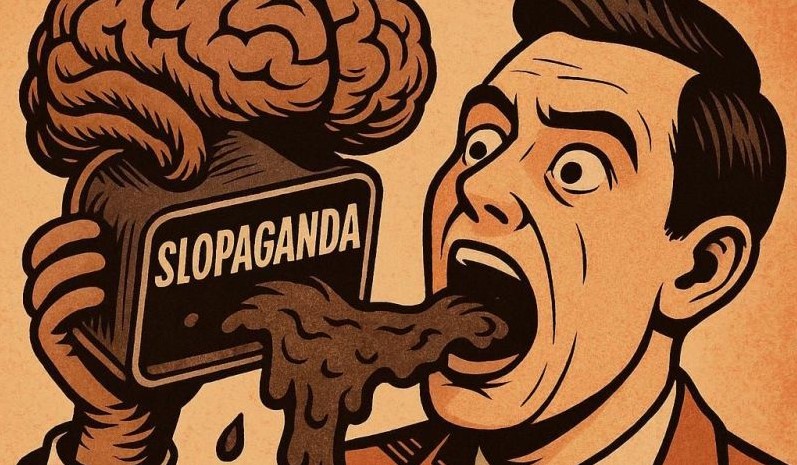

🤖 Slopaganda

Propaganda used to require intent. Someone had to want to deceive you.

A 2025 arXiv paper coined “slopaganda”: AI-generated misinformation produced without deliberate deception. No propagandist needed. Flood the information ecosystem with plausible-sounding content faster than anyone can fact-check it, and the effect on epistemic infrastructure is identical to deliberate disinformation, maybe worse. At least you can understand a liar’s motives.

We built robust defenses against motivated deceivers. We have almost no institutional immune system for well-intentioned, automated epistemic noise. The problem isn’t bad actors. It’s the economics of generating attention at near-zero marginal cost.

The most dangerous information environment isn’t one where people lie to you. It’s one where nobody’s lying, everyone’s wrong, and the volume is infinite.

Your news diet is already downstream of this. The question is whether you know it.

Image

A few times a year, Elon Musk replies to one of my X posts (aka Tweets). I never know why. Sometimes it’s a dad joke. Sometimes a pithy observation about science. Sometimes it’s a snarky observation about a celebrity. This time it was all three. I wondered why Elon replied to me. Not the millions of other voices in the thread. Mine. At first, I assumed it was luck. But the more I looked at it, the more it felt like physics, not chance. This time I got to be part of a billionaire sandwich.

Marc Andreessen made a sweeping claim. I didn’t argue it head-on. I just nudged it—one line, one example, a small crack in an otherwise smooth surface. Suddenly, that was the spot people looked at. Not because it was louder, but because it changed the shape of the conversation.

Moral: If you want to be heard, don’t add more noise. Change the signal.

Conversation

Latest on Into The Impossible

I just sat down with Emad Mostaque—and he told me something I can’t shake: the biggest AI labs already have models they’ll never release. We dug into why, how reinforcement learning may be killing creativity, and why AI might soon outperform humans in ways that make us… optional. Watch this and tell me—are we building tools, or replacements?

Channel members can watch it a day early — join here.

Advertisement

By popular demand, and for my mental health 😳, I am starting a paid “Office Hours” where you all can connect with me for the low price of $19.99 per hour. I get a lot of requests for coffee, to meet with folks one-on-one, to read people’s Theories of Everything, etc. Due to extreme work overload, I’m only able to engage directly with supporters who show an ongoing commitment to dialogue, which is why I host a monthly Zoom session exclusively for patrons in the $19.99/month tier.

It’s also available for paid Members of my YouTube channel at the Cosmic Office Hours level (also $19.99/month). Join here and see you in my office hours!